The essential prep you need to know for readying your rig for an Nvidia RTX 3080, 3090, or 3070.

How to prepare your PC for the Nvidia GeForce RTX 30-series

The Nvidia GeForce RTX 30-series graphics processing units (GPUs) are finally out in the wild. Well, the Nvidia GeForce RTX 3080 is, in first-party (Founders Edition) and third-party form, and the Nvidia GeForce RTX 3090 and Nvidia GeForce RTX 3070 aren’t far behind, either.

If you’re tempted to buy one – whether you’re upgrading from an Nvidia GTX 10-series, 20-series, or an AMD graphics card – consider this page your go-to guide for the essential info you need to know in prepping your system for 30-series readiness.

What is Nvidia’s GeForce RTX 30 Series?

It’s the latest graphics card series from Nvidia, and one that builds on the ray tracing (via RT Cores) and AI upscaling (via Tensor Cores) of the preceding 20-series GPUs. Three GPUs are part of the initial launch: the GeForce RTX 3080, 3090, and 3070.

Here’s how the core information and specs for the 3080, 3090, and 3070 stack up in comparison to each other.

Where to buy Nvidia GeForce RTX 30 Series GPUs in Australia

With no pre-order options for the Nvidia 30-series GPUs, outside of a raffle for the Founders Editions of the three graphics cards on Mwave (with later-than-launch shipping dates), it’s best to keep an eye on your preferred online retailers and sign up for their respective mailing lists to ensure you don’t miss out at launch.

Once the cards become available, searching for ‘RTX 3080’ (or ‘RTX 3090’ or ‘RTX 3070’, depending on your GPU preference) on PC price-comparison site Static Ice is a great place to start. Otherwise, keep an eye on go-to online retailers like the aforementioned Mwave, Scorptec, Amazon Australia, or Newegg.

There’s no way of telling at this stage whether demand will outstrip supply for the Nvidia 30-series GPUs, but it’s absolutely worth waiting for reviews to go online (3080 reviews are already out in the wild) to see how closely real-world test results match Nvidia’s claimed gains.

It’s worth noting that unlike the Titan GPUs, which were only sold directly by Nvidia, the RTX 3090 GPU will be available to purchase from third-party retailers.

Should I buy the RTX 3070, 3080, or 3090?

Some of this boils down to personal preference, but there is a clear logic to the pricing and performance of Nvidia’s 30-series GPUs. If you want a good boost to frames and fidelity and your current graphics card isn’t an RTX 2080 Ti or Titan RTX equivalent, you can save money by buying the RTX 3070, which Nvidia says is slightly faster than a 2080 Ti.

The mid-range option for the trio of 30-series GPUs is the 3080, which Nvidia described as the “perfect [graphics] card for gamers” in a recent media briefing. It’s the starting point for the new GDDR6X RAM, which is the fastest in the world, and it houses more Nvidia CUDA cores, has more memory, and has a higher memory interface width. Translation: it should have a measurable performance boost and, according to Nvidia, is up to twice as fast as an RTX 2080 card.

If you want to go all out, the RTX 3090 GPU is the most expensive but also boasts the most performance of the lot. It has the most Nvidia CUDA cores, 2.4 times the memory of the 3080, and the highest memory interface width of the three 30-series GPUs. In a recent media briefing, Nvidia said this GPU is intended for scientists and gamers who want to play games in 8K resolution.

Nvidia speed disclaimers

In that same recent media briefing, Nvidia clarified that percentage gains in performance differ on a per-game basis. While an individual game’s optimisation will determine the kind of max frame rates that can be eked from it, you should safely expect all of the 30-series GPUs to outperform previous generations of Nvidia cards. How much that percentage gain is exactly will come from the in-depth reviews, and those should be used to determine the cost-to-frames benefit of upgrading to a 30-series GPU.

Nvidia GeForce RTX 30 Series space considerations

The length and height of the Nvidia 30-series GPUs are your first hurdles to tackle when considering an upgrade. The RTX 3070 is 24mm shorter than the Nvidia GeForce GTX 1070 but 13mm longer than the RTX 2070. All are two-slot GPUs, which means they cover two PCI-express slots inside a desktop PC’s case and use up two expansion slots on the back of the case.

As for the RTX 3080, its predecessors, the GTX 1080 and RTX 2080, have identical lengths that are 18mm shorter than the 285mm-long 3080. All of these graphics cards are two-slot GPUs, so height shouldn’t be a concern for those upgrading.

The RTX 3090 is where things start to get hefty. While the GTX 1080 Ti, RTX 2080 Ti, and Titan RTX are all 267mm long, the RTX 3090 is a noticeable 46mm longer at 313mm. This length alone may rule out compact desktop PC cases. More importantly, the RTX 3090 is also a three-slot GPU, which means it will cover three PCI-express slots: the one it’s connected to, as well as the two below it.

It’s worth noting that the RTX 3090 is the only SLI-capable 30-series GPU, which means you can link multiple 3090 GPUs together in a single PC, but an SLI configuration of two cards would take up six slots instead of three. Whatever the dimensions of your next GPU purchase, measure the inside of your desktop PC’s case if you’re concerned.
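If you’d rather not do the sums in your head, the measurements above can be turned into a quick check. This is a minimal sketch that uses only the lengths and slot counts quoted in this article; the `Card` class, the `fits` function, and the clearance and free-slot inputs are hypothetical names for illustration, and you would measure the two inputs inside your own case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    name: str
    length_mm: int  # card length as quoted in this article
    slots: int      # expansion slots the card covers


# Only the cards with an absolute length given in the article are listed.
CARDS = {
    "RTX 3080": Card("RTX 3080", 285, 2),
    "RTX 3090": Card("RTX 3090", 313, 3),
}


def fits(card: Card, clearance_mm: int, free_slots: int, count: int = 1) -> bool:
    """Return True if `count` copies of `card` fit the measured space.

    clearance_mm: measured room for the card's length inside the case.
    free_slots:   adjacent expansion slots available for graphics cards.
    """
    long_enough = card.length_mm <= clearance_mm
    enough_slots = card.slots * count <= free_slots
    return long_enough and enough_slots
```

For example, `fits(CARDS["RTX 3090"], clearance_mm=320, free_slots=6, count=2)` models the six-slot SLI case described above. It doesn’t account for cables, drive cages, or airflow, so treat a pass as a starting point rather than a guarantee.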

Nvidia GeForce RTX 30 Series power considerations

The wattage of your PC’s power supply unit (PSU) will also be a key consideration in determining whether your current desktop is compatible with an Nvidia GeForce RTX 30-series GPU upgrade. What used to be a 500W recommendation for the GTX 1070 and a 550W recommendation for the RTX 2070 is now 650W for the RTX 3070.

It’s a bigger jump for the RTX 3080 and 3090. While a 500W PSU was good enough for the GTX 1080 and a 650W was sufficient for the RTX 2080, you’ll want at least a 750W PSU for the RTX 3080. That same 750W PSU is recommended for the RTX 3090, compared to the shared 650W recommendation for the Titan RTX and RTX 2080 Ti, and the 600W recommendation for the GTX 1080 Ti.

All of the 30-series GPUs are powered by 8-pin PCI-express power connectors, with only one required for the RTX 3070 but two apiece needed for the RTX 3080 and RTX 3090. Nvidia has said its Founders Edition GPUs will include an 8-pin-to-12-pin adaptor for the 3080 and 3090 cards in the box, so as long as your PC has two 8-pin PCI-express power connectors to spare, you’re good to go.

If you opt to use SLI to connect two RTX 3090 GPUs, factor in an additional 350W PSU requirement to accommodate the second graphics card.
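The power requirements above can be checked the same way. This is a rough sketch built only from the figures in this article (recommended PSU wattage, 8-pin connector counts, and the extra 350W for a second RTX 3090). The function name `psu_shortfalls` is made up for illustration, and doubling the connector count for an SLI pair is an assumption based on each card needing its own two connectors.

```python
# Recommended PSU wattage and 8-pin PCI-express connectors per card,
# as quoted in this article.
RECOMMENDED_PSU_W = {"RTX 3070": 650, "RTX 3080": 750, "RTX 3090": 750}
EIGHT_PIN_CONNECTORS = {"RTX 3070": 1, "RTX 3080": 2, "RTX 3090": 2}
SLI_EXTRA_W = 350  # extra allowance for a second RTX 3090


def psu_shortfalls(gpu: str, psu_watts: int, spare_8pin: int,
                   sli: bool = False) -> list[str]:
    """Return a list of problems; an empty list means the PSU looks fine."""
    if sli and gpu != "RTX 3090":
        raise ValueError("Only the RTX 3090 supports SLI in this generation")

    cards = 2 if sli else 1
    need_watts = RECOMMENDED_PSU_W[gpu] + (SLI_EXTRA_W if sli else 0)
    # Assumption: each card in an SLI pair needs its own set of connectors.
    need_pins = EIGHT_PIN_CONNECTORS[gpu] * cards

    problems = []
    if psu_watts < need_watts:
        problems.append(f"PSU too small: need {need_watts}W, have {psu_watts}W")
    if spare_8pin < need_pins:
        problems.append(
            f"Not enough 8-pin connectors: need {need_pins}, have {spare_8pin}"
        )
    return problems
```

Calling `psu_shortfalls("RTX 3080", psu_watts=650, spare_8pin=1)` would flag both the wattage and the connector count. The numbers are recommendations for a whole system, so a PC with lots of drives or a power-hungry CPU may need more headroom.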

Nvidia GeForce RTX 30 Series cooling considerations

The differences in maximum GPU temperature between Nvidia generations are small: at best a slight improvement and at worst a negligible increase. For instance, the RTX 3070 has a max GPU temperature of 93°C, with the RTX 2070 lower at 89°C, but the GTX 1070 higher at 94°C.

It’s a similar story for the RTX 3080, which has a 93°C max GPU temperature, compared to 88°C for the RTX 2080 and 94°C for the GTX 1080. The RTX 3090 has the same 93°C max GPU temperature as the RTX 3080, compared with the shared 89°C for the Titan RTX and RTX 2080 Ti, and the 91°C for the GTX 1080 Ti.

Both the first-party Founders Editions of the 30-series GPUs and the majority of third-party versions have an emphasis on cooling improvements so, on paper, no additional cooling should be required for an existing or new desktop build.

Nvidia GeForce RTX 30 Series mouse and monitor considerations

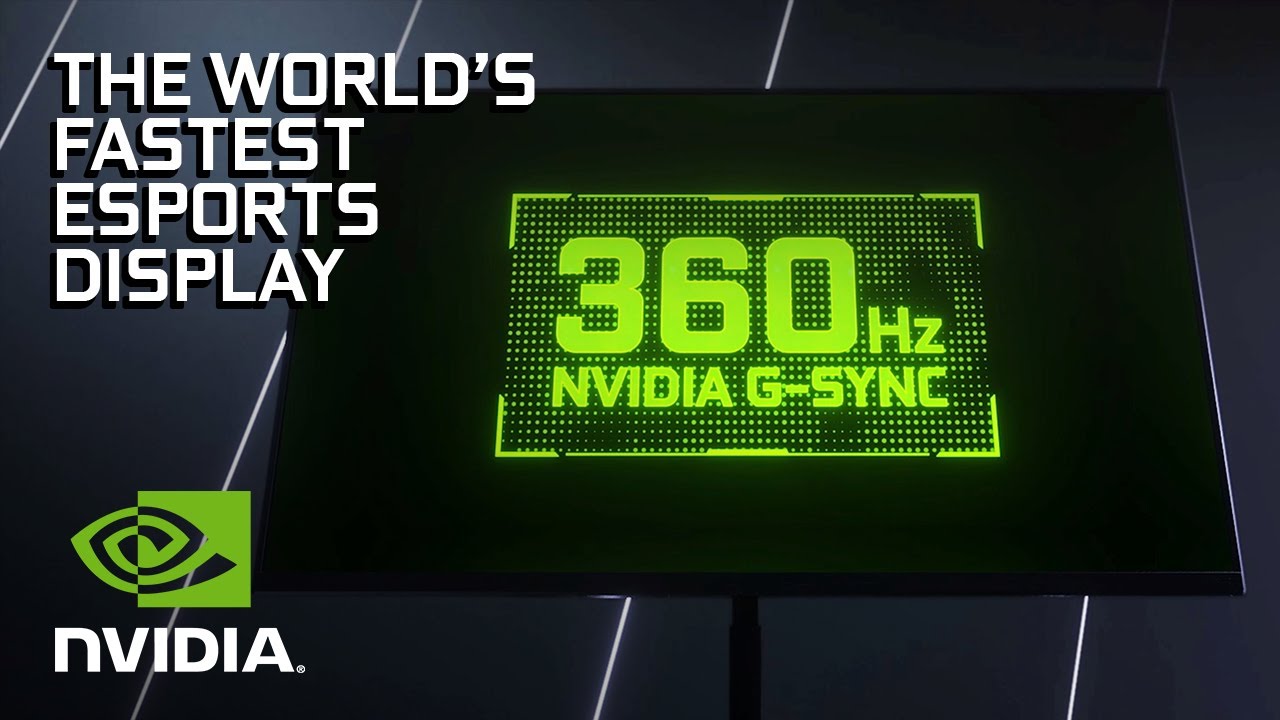

You may want to invest in a new monitor and even a new gaming mouse to complement your new 30-series GPU. The Nvidia 30-series GPUs are set to benefit from Nvidia Reflex, which is a mix of GPU refinements, G-Sync display improvements, and software technologies all designed to reduce click-to-display latency. This feature is targeted primarily at esports players and those seeking a competitive edge in online multiplayer games that are built around rewarding speedy reactions.

Ultimately, Nvidia Reflex is built as a way to lower system latency and, given the power of the 30-series GPUs, certain monitor manufacturers are rolling out screens that have refresh rates of up to 360Hz. Compatible gaming monitors will also include Reflex Latency Analyser, which lets you connect a supported gaming mouse to a screen’s USB port so you can see the numbers on system latency.

Reflex Latency Analyser gaming monitors

There are currently four monitors that will support this feature:

- Asus ROG Swift 360Hz PG259QNR

- Acer Predator X25

- MSI Oculux NXG253R

- Alienware 25 Gaming Monitor AW2521H

Reflex Latency Analyser gaming mice

These are the four gaming mice with firmware updates that let them take advantage of the Reflex Latency Analyser:

- Asus ROG Chakram Core

- Logitech G Pro Wireless

- Razer DeathAdder v2

- SteelSeries Rival 3

Nvidia GeForce RTX 30 Series driver considerations

By installing Nvidia’s GeForce Experience software, you can be notified every time new Game Ready Drivers drop for any supported Nvidia GPU. This is also true of the 30-series graphics cards, which should see performance improvements and game-specific optimisations with each driver update. Open GeForce Experience and click on the ‘Drivers’ tab, then ‘Check for updates’ to see if you have the latest version.

GeForce Experience defaults to Game Ready Drivers, but you can click the three vertical dots next to ‘Check for updates’ and switch to ‘Studio Driver’ if your GPU is primarily used for creative apps. If you’re upgrading from an Nvidia GPU, you don’t need to install new drivers. If, however, you’re upgrading from an AMD GPU, it’s best to uninstall the AMD graphics card drivers and perform a clean installation of Nvidia drivers via GeForce Experience.
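If you prefer a command line to GeForce Experience, you can also read the installed driver version with `nvidia-smi`, a utility that ships with Nvidia’s drivers. The sketch below is an optional extra rather than part of Nvidia’s recommended process: it assumes `nvidia-smi` is on your PATH, and the helper names `parse_gpu_lines` and `query_gpus` are hypothetical.

```python
import shutil
import subprocess


def parse_gpu_lines(output: str) -> list[tuple[str, str]]:
    """Turn 'name, driver_version' CSV lines into (name, version) pairs."""
    pairs = []
    for line in output.splitlines():
        if not line.strip():
            continue  # skip blank lines
        # Split on the last comma so a comma in the GPU name is preserved.
        name, _, version = line.rpartition(",")
        pairs.append((name.strip(), version.strip()))
    return pairs


def query_gpus() -> list[tuple[str, str]]:
    """Ask nvidia-smi for each GPU's name and driver version.

    Returns an empty list if nvidia-smi is missing or reports an error,
    for example when no Nvidia driver is installed yet.
    """
    exe = shutil.which("nvidia-smi")
    if exe is None:
        return []
    result = subprocess.run(
        [exe, "--query-gpu=name,driver_version", "--format=csv,noheader"],
        capture_output=True,
        text=True,
    )
    if result.returncode != 0:
        return []
    return parse_gpu_lines(result.stdout)
```

Running `query_gpus()` after installing your new card should list it alongside the driver version, which you can compare against the latest release shown in GeForce Experience.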
