I don’t know if 2024 will go down as the year of AI, but it certainly feels like the year AI became impossible to escape.

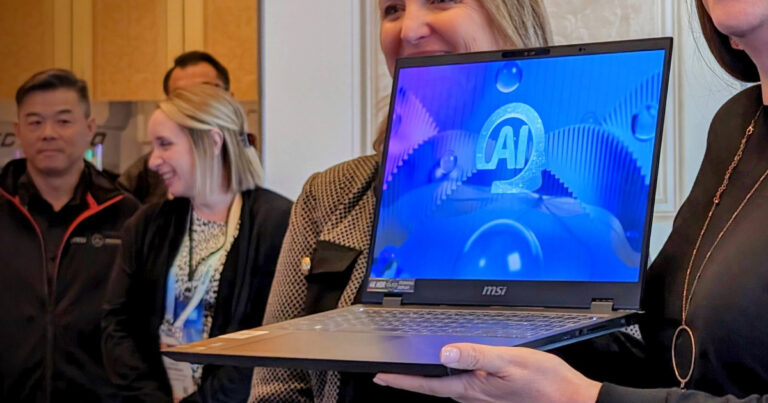

CES 2024 was dominated by AI tech, and Computex 2024 didn’t deviate from that trend. Taipei’s biggest technology tradeshow was filled with folks looking to shill their AI-powered wares like hot dog vendors at a baseball game.

If you’re looking to get your head around the many new laptops (which are being billed as AI PCs) announced at the tradeshow, TOPS is a key term you’ll want to understand. Manufacturers began adopting the term earlier this year and have since started using it as a go-to benchmark for AI PC and NPU performance.

For those unfamiliar, an NPU is a neural processing unit. It’s a specific part of a PC that’s designed to handle AI-related workloads in the same way that a GPU is built to handle stuff like games and 3D graphics. While most older PCs don’t have a built-in NPU, many of the latest AMD, Intel and Qualcomm processors do feature one.

Some NPUs are better than others, but assuming they outlast the current wave of hype around AI technologies, the PC market will eventually reach a point where an NPU is as ubiquitous as a CPU or GPU. Given that, the conversation has begun to shift from whether or not a PC has an NPU to what that hardware can do. After all, not all NPUs are created equal.

Enter TOPS. This is shorthand for trillions of operations per second, or tera operations per second. The higher the number, the faster a given PC can process AI-related tasks and applications. The faster a PC does anything, the more time you’re “saving” by using it. Even if AI is being marketed as the next big thing, the underlying logic here isn’t nearly as much of a departure from traditional PC speak as the marketing might have you believe.
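To make the arithmetic concrete, here is a rough back-of-the-envelope sketch in Python. The function name `ideal_seconds` and the 20-trillion-operation workload are invented for illustration, and the result is a best-case figure: it assumes the chip sustains its peak TOPS rating, which real hardware rarely does.

```python
# Illustrative only: the workload size is a made-up number, and real NPUs
# rarely sustain their peak TOPS rating in practice.

OPS_PER_TOPS = 10**12  # one TOPS = one trillion operations per second


def ideal_seconds(workload_ops: float, tops: float) -> float:
    """Best-case time to run `workload_ops` operations at a peak `tops` rating."""
    if tops <= 0:
        raise ValueError("tops must be positive")
    return workload_ops / (tops * OPS_PER_TOPS)


# A hypothetical AI task that needs 20 trillion operations.
workload = 20 * OPS_PER_TOPS

for rating in (10, 40):
    print(f"{rating} TOPS: {ideal_seconds(workload, rating):.2f} s")
```

On paper, quadrupling the TOPS figure cuts the time for the same task to a quarter, which is the same "bigger number, less waiting" logic PC makers have always leaned on.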

TOPS is also being used as a way to distinguish between different sub-segments of the AI PC landscape.

While most PCs are capable of running AI applications without an NPU, an AI PC with dedicated AI hardware can run them much better. Hence the rise of the term AI PC. You’ve also got Microsoft’s new Copilot Plus PC branding, which covers any AI PC with an NPU rated for at least 40 TOPS of performance.
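As a rough sketch of how those sub-segments stack up, the snippet below sorts a machine into one of three buckets based on its NPU rating alone. The function `pc_segment` and the bucket labels are hypothetical, and the single 40 TOPS check is a simplification that assumes TOPS is the only criterion that matters.

```python
from typing import Optional

# Threshold quoted for Microsoft's Copilot Plus PC branding.
COPILOT_PLUS_MIN_TOPS = 40


def pc_segment(npu_tops: Optional[float]) -> str:
    """Bucket a PC by NPU rating; pass None for a machine with no NPU."""
    if npu_tops is None:
        return "PC without an NPU"
    if npu_tops >= COPILOT_PLUS_MIN_TOPS:
        return "Copilot Plus PC"
    return "AI PC"


# Hypothetical NPU ratings, not real products.
for tops in (None, 11, 45):
    print(tops, "->", pc_segment(tops))
```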

Even if all this largely boils down to marketing, there’s still some utility here for consumers. Understanding how the brands looking to sell you your next PC are thinking about the category writ large helps you to understand what features and performance will suit your needs.

Of course, the thing about anything that’s measured in trillions is that most people aren’t equipped to grapple with numbers of that magnitude, let alone ones that relate to computational workloads. That’s literally the problem that computers exist to solve, but while the technology industry is already talking TOPS, you have to wonder whether the term means anything to those who aren’t PC enthusiasts or AI advocates.

Speaking to Reviews.org, Robert Hallock, vice president and GM for Intel’s client AI and technical marketing division, laid out his rationale for TOPS as an AI metric in fairly digestible terms.

“Every device needs a spec,” he explained.